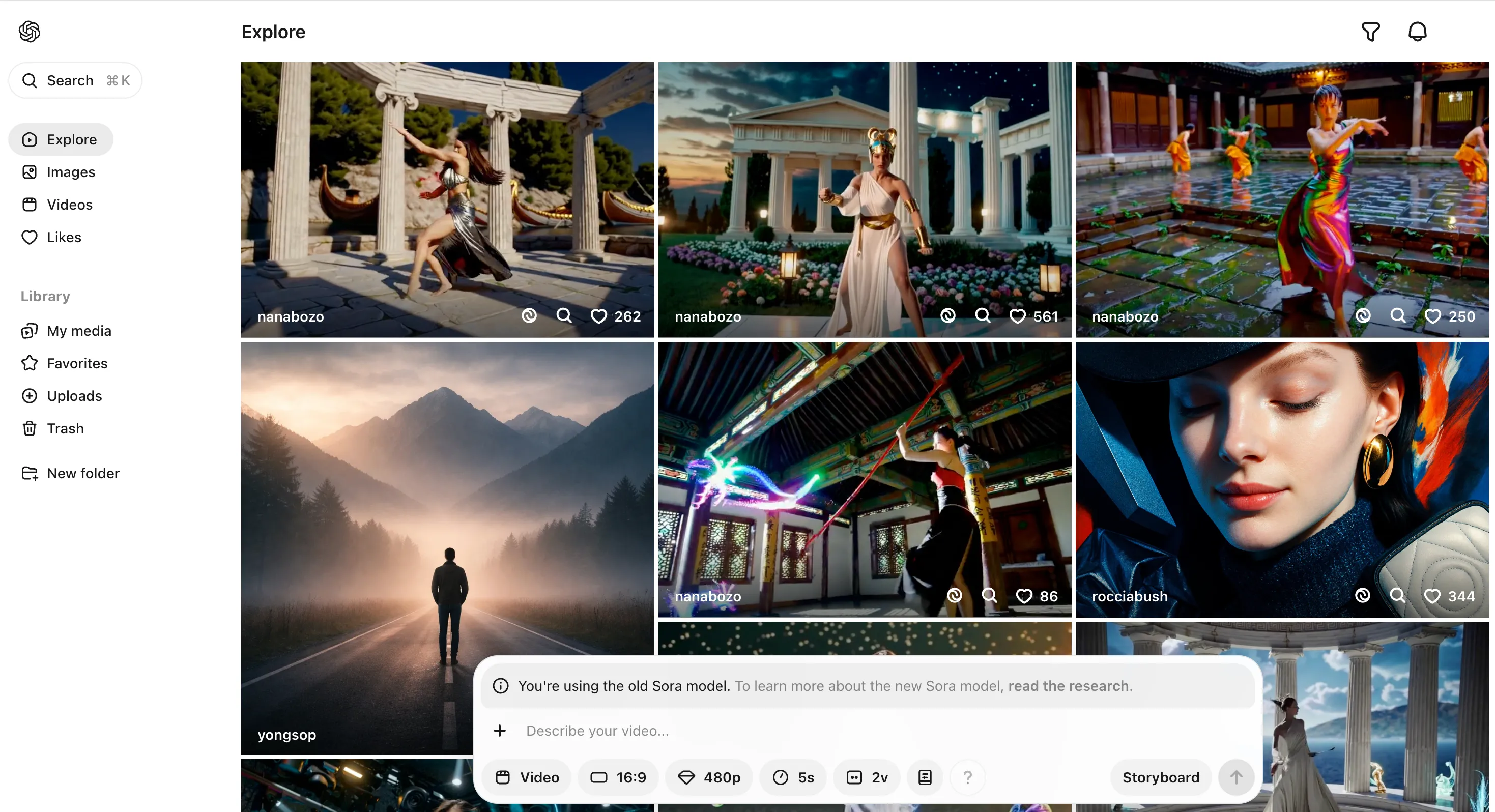

CapCut × Sora 2 × Veo 3.1: A Hands-On Comparison of the Top 3 AI Video Generators

TL;DR

CapCut now lets you run OpenAI Sora 2 and Google Veo 3.1 side-by-side within the same editing environment. Bottom line: Sora 2 produces more cinematic, film-like output; Veo 3.1 delivers more reliable 4K and handles audio sync automatically. Both feed directly into CapCut's timeline, making the generate → edit → export workflow genuinely end-to-end.

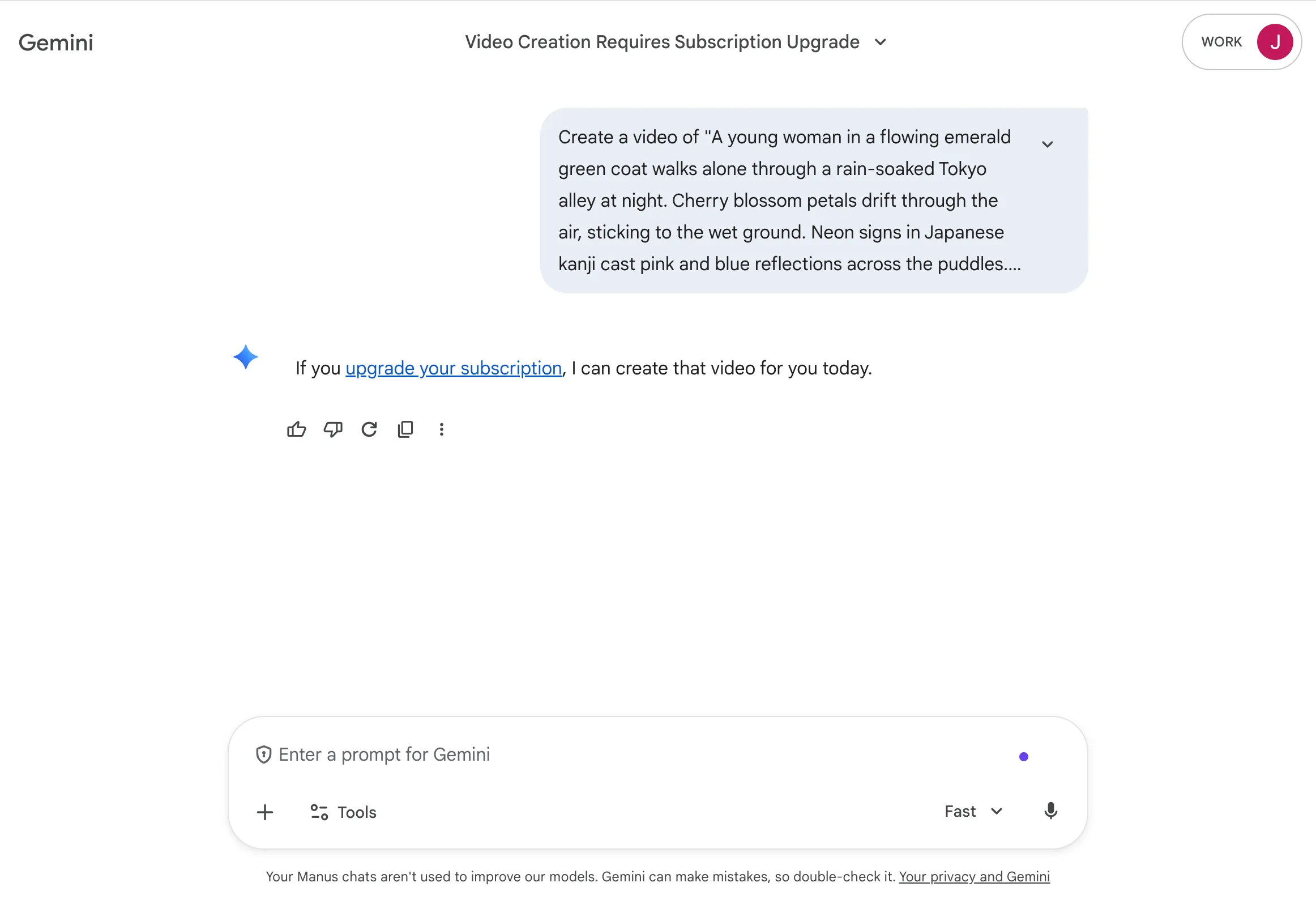

Source: Google Blog | Veo 3.1 video generation interface inside Google Flow

Why AI Video Generation Matters Right Now

Have you ever spent more than three hours in After Effects trying to produce a single video? I have. And honestly, by March 2026 standards, more than half of that time could have been handled by AI.

Zapier's 2026 roundup of the best AI video generators lists 18 tools competing in the market right now. The biggest shift: CapCut has integrated OpenAI's Sora 2 and Google's Veo 3.1 directly into its editing interface. Previously, you'd generate AI video in one tool, download it, then import it into a separate editor. That friction is gone.

MIT Technology Review's 2026 Breakthrough Technologies ranked generative AI as a key technology — and video generation is the segment moving fastest toward mainstream adoption.

Test Setup

Before diving in, here's how I ran the comparison:

- Device: MacBook Pro M3 Pro, 36GB RAM

- CapCut version: v5.2.0 (updated 2026-03-08)

- Sora 2: Connected via ChatGPT Plus ($20/month)

- Veo 3.1: Connected via Google AI Premium ($19.99/month)

- Test dates: March 10–11, 2026

- Network: Wired, 500 Mbps

I used three identical prompts across both models:

- Product video: "Espresso being extracted from a coffee machine, cinematic close-up, warm lighting"

- Landscape video: "Drone view over Seoul's Han River at sunset, 4K cinematic"

- Explainer video: "Code being typed on screen, dark theme, programming atmosphere"

Prompt 1: Product Video

Source: Manus AI Video Generator Comparison | OpenAI Sora 2 interface

Sora 2 Result

Sora 2 genuinely understands "cinematic." The coffee extraction shot had rising steam with visible detail, light reflecting off the liquid surface — it looked like a real film frame. Generation time was 47 seconds. The downside: the coffee cup visibly distorts near the end of the clip — a classic AI artifact.

Sora 2 stats:

- Generation time: 47 seconds

- Resolution: 1080p (default)

- Duration: 5 seconds

- Frame rate: 24fps

- Strengths: Lighting quality, depth of field

- Weakness: Object distortion toward the end

Source: Manus AI Video Generator Comparison | Google Veo 3.1 interface

Veo 3.1 Result

Veo 3.1 scores on consistency. Object coherence across the full clip was significantly better, and 4K output is the default, which is a clear resolution advantage. That said, the cinematic feel wasn't quite there: the result is technically excellent but aesthetically safe rather than genuinely evocative.

Veo 3.1 stats:

- Generation time: 32 seconds

- Resolution: 4K (default)

- Duration: 5 seconds

- Frame rate: 30fps

- Strengths: 4K default, object consistency

- Weakness: Less cinematic atmosphere
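To put the two sets of stats side by side, here is a minimal sketch that works out the frame count and the relative generation time. It uses only the figures reported above for this one prompt, so it says nothing about averages across prompts; the dictionary layout and variable names are purely illustrative.

```python
# Figures reported above for the product-video prompt only.
runs = {
    "Sora 2": {"gen_seconds": 47, "clip_seconds": 5, "fps": 24},
    "Veo 3.1": {"gen_seconds": 32, "clip_seconds": 5, "fps": 30},
}

for name, run in runs.items():
    frames = run["clip_seconds"] * run["fps"]   # total frames in the clip
    per_frame = run["gen_seconds"] / frames     # generation seconds per frame
    print(f"{name}: {frames} frames, {per_frame:.2f} s of generation per frame")

sora = runs["Sora 2"]["gen_seconds"]
veo = runs["Veo 3.1"]["gen_seconds"]
time_reduction = (sora - veo) / sora            # fraction of Sora 2's time saved
print(f"Veo 3.1 took {time_reduction:.0%} less time on this prompt")
```

On these numbers Veo 3.1 needed roughly a third less time while producing more frames (150 versus 120), and at a higher default resolution.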

Prompt 2: Landscape Video

The landscape prompt revealed the sharpest differences.

Sora 2's Han River shot was stunning: light fracturing across the water surface was nearly indistinguishable from real drone footage. But Seoul's skyline included a building that doesn't exist. A phantom tower appeared next to the 63 Building. Hallucination in video form.

Veo 3.1 had more accurate building geometry, but water rendering didn't hit the same level of realism as Sora 2. What impressed me most was the automatic audio — water sounds and wind noise were generated contextually, without any extra prompting. As Google's official blog (February 2026) highlighted, this "contextual audio awareness" is a genuine differentiator.

| Comparison | Sora 2 | Veo 3.1 |
|---|---|---|
| Natural elements (water, sky) | ★★★★★ | ★★★★☆ |
| Building / object accuracy | ★★★☆☆ | ★★★★★ |
| Auto audio generation | ❌ (manual) | ✅ (context-aware) |
| Max resolution | 1080p default (4K with extra credits) | 4K |
| Color grading | Cinematic tone | Natural palette |

Prompt 3: Explainer / Code Video

This one surprised me.

Sora 2's coding scene looked incredible visually, but the code on screen was complete gibberish. Something like `function asdkjf(){ return 42/0; }`. Aesthetic score: 9/10. Accuracy score: 2/10. A bit deflating.

Veo 3.1 also generated meaningless code, but the screen layout looked far more like VS Code — correct editor chrome, realistic cursor blinking, proper syntax highlighting regions. Still nonsense content, but better visual verisimilitude. The honest conclusion: AI video generators aren't ready to fake "actual coding" convincingly yet.

Source: Manus AI Video Generator Comparison | Top AI video generation tools in 2026

The Real Advantage of CapCut Integration

Here's a workflow insight that doesn't appear in the official docs. The biggest win with CapCut integration isn't model quality — it's dropping generated clips directly into your timeline without leaving the app.

Old workflow: AI video generation (Sora / Runway) → Download → Import into Premiere Pro / DaVinci → Edit → Export

Typical time: ~30–40 minutes per 5-second clip

New workflow: Generate inside CapCut → Place on timeline → Edit → Export

Typical time: ~10–15 minutes per 5-second clip

I made a 15-second YouTube Shorts clip in 8 minutes total. That same clip would have taken 30 minutes before.
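For a rough sense of scale, the sketch below turns the time ranges quoted above into percentage savings. The ranges are this post's own estimates rather than measurements, and the function name `savings_range` is just an illustrative helper.

```python
# Time ranges in minutes per 5-second clip, as quoted in this post.
OLD_WORKFLOW = (30, 40)   # generate, download, import, edit, export
NEW_WORKFLOW = (10, 15)   # generate inside CapCut, edit, export


def savings_range(old, new):
    """Return (smallest, largest) fractional time saving between two ranges."""
    old_lo, old_hi = old
    new_lo, new_hi = new
    worst = (old_lo - new_hi) / old_lo   # fastest old run vs. slowest new run
    best = (old_hi - new_lo) / old_hi    # slowest old run vs. fastest new run
    return worst, best


worst, best = savings_range(OLD_WORKFLOW, NEW_WORKFLOW)
print(f"Time saved per clip: {worst:.0%} to {best:.0%}")

# The 15-second Shorts example: 8 minutes now vs. 30 minutes before.
shorts_saving = (30 - 8) / 30
print(f"Shorts example: {shorts_saving:.0%} saved")
```

Even in the least favorable pairing the integrated workflow halves the time per clip, and the Shorts example sits near the top of the range.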

This parallels what's happening across the AI tool landscape more broadly — just as MCP connects AI agents to external tools, video generation tools integrating with editing tools is the same "connected AI" trend playing out in the creative space.

Pricing (as of March 2026)

| Item | Sora 2 (via ChatGPT Plus) | Veo 3.1 (via Google AI Premium) | CapCut Pro |
|---|---|---|---|
| Monthly subscription | $20 | $19.99 | ₩11,900 |
| Monthly generation limit | ~50 videos (5 sec) | ~100 videos (5 sec) | Unlimited (AI gen separate) |
| Extra credits | $0.40/video | $0.20/video | n/a |
| Max video length | 20 seconds | 30 seconds | n/a |
| 4K output | Extra credits | Included | n/a |

On pure value, Veo 3.1 wins: similar price, double the generation limit, 4K included. But if visual quality is paramount, Sora 2's cinematic rendering is still in a class of its own.
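The value comparison can be sketched as a small cost model built from the table above. It makes several simplifying assumptions: the approximate limits (~50 and ~100) are treated as exact, every clip is a 5-second video, Sora 2's extra 4K credits are ignored, and the CapCut Pro subscription is left out. The `plans` dictionary and `monthly_cost` function are illustrative names, not part of any real API.

```python
# Plan figures from the pricing table (as of March 2026); limits are approximate.
plans = {
    "Sora 2 (ChatGPT Plus)": {
        "monthly_usd": 20.00, "included_clips": 50, "extra_usd": 0.40,
    },
    "Veo 3.1 (Google AI Premium)": {
        "monthly_usd": 19.99, "included_clips": 100, "extra_usd": 0.20,
    },
}


def monthly_cost(plan, clips):
    """Subscription plus per-clip charges for anything beyond the included limit."""
    extra_clips = max(0, clips - plan["included_clips"])
    return plan["monthly_usd"] + extra_clips * plan["extra_usd"]


for name, plan in plans.items():
    per_clip = plan["monthly_usd"] / plan["included_clips"]
    total = monthly_cost(plan, 150)
    print(f"{name}: ${per_clip:.2f} per included clip, ${total:.2f} for 150 clips")
```

Under these assumptions the gap widens with volume: at 150 clips a month, Sora 2 comes to about twice the cost of Veo 3.1.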

What Surprised Me

My hypothesis going in was "Sora 2 wins across the board." OpenAI got there first, after all. The reality was more nuanced.

Where Veo 3.1 outperformed expectations:

- 4K output quality and stability

- Automatic audio generation (genuine game-changer)

- Object consistency across frames (far fewer hallucinations)

- Generation speed (~30% faster on average)

Where Sora 2 still leads:

- Cinematic aesthetics (color, lighting, depth of field)

- Natural element rendering (water, fog, light)

- Prompt comprehension for abstract or expressive direction

As NVIDIA's blog (January 2026) noted, local 4K AI video generation is now viable — the LTX-2 model on an RTX GPU can generate without cloud dependency. That's a topic for a separate post.

When to Use Which

Use Sora 2 when:

- Aesthetic quality is non-negotiable (brand films, fashion, food)

- You need emotional, expressive content

- A single cinematic shot is the deliverable

Use Veo 3.1 when:

- Volume matters — YouTube Shorts, TikTok, high-frequency content

- 4K resolution is required

- You want audio included in a single pass

- Budget is a consideration (2× generation limit)

Use CapCut as your hub when:

- You want to generate from both models and pick the best result

- You need a seamless generate → edit → publish workflow
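The guidance above can be written down as a small decision helper. This is only one way to encode the rules of thumb from this post: the `Brief` fields and the `suggest_model` function are hypothetical names invented for illustration, and the tie-breaking rule (fall back to comparing both in CapCut) is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    """What a project needs; every field is an illustrative flag."""
    needs_4k: bool = False
    needs_audio: bool = False
    high_volume: bool = False
    budget_sensitive: bool = False
    aesthetics_first: bool = False


def suggest_model(brief: Brief) -> str:
    """Map a brief onto the recommendations in this section."""
    veo_reasons = [
        brief.needs_4k,
        brief.needs_audio,
        brief.high_volume,
        brief.budget_sensitive,
    ]
    if any(veo_reasons) and not brief.aesthetics_first:
        return "Veo 3.1"
    if brief.aesthetics_first and not any(veo_reasons):
        return "Sora 2"
    # Mixed or unspecified needs: use CapCut as the hub and pick the best result.
    return "Generate with both in CapCut and compare"


print(suggest_model(Brief(needs_4k=True, high_volume=True)))
print(suggest_model(Brief(aesthetics_first=True)))
print(suggest_model(Brief(aesthetics_first=True, needs_audio=True)))
```

When requirements pull in both directions, the helper deliberately refuses to pick a winner, which mirrors the point of using CapCut as the hub.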

Final Thoughts

A year ago, AI video generation was a novelty. Today it's a tool worth building into your actual workflow. CapCut's integration has lowered the barrier to entry in practice, not just technically.

I'll be using this combination for blog thumbnails, YouTube Shorts, and short promos going forward. I'll share a follow-up once I've used it in production for a month.

Are you already using AI video tools in your work, or still watching from the sidelines?

References:

- Google Blog - Flow updates: February 2026

- Zapier - The 18 best AI video generators in 2026

- NVIDIA Blog - RTX Accelerates 4K AI Video Generation

- MIT Technology Review - 10 Breakthrough Technologies 2026

Related posts:

- MCP (Model Context Protocol): Connecting AI Agents in 2026 - The standard driving AI tool interoperability