Have you ever wished that Claude or ChatGPT could directly query your database? Or that your AI could read Slack messages and automatically file GitHub issues? The dream is obvious. The reality has always been that each integration requires custom API code, different authentication schemes, and incompatible data formats — endless friction.

MCP (Model Context Protocol) solves exactly this problem. Anthropic announced this open protocol in November 2024, and it has since been adopted by OpenAI, Microsoft, and Google — effectively becoming the USB port of the AI agent world. This guide covers the full process of building an MCP server in Python and connecting it to Claude Desktop.

TL;DR

MCP is an open protocol for connecting AI apps to external data sources and tools in a standardized way. Using the Python FastMCP library, you can create a custom tool server with a few decorators and register it in Claude Desktop's config file — and it just works.

What Is MCP? The USB-C Analogy

MCP is, in essence, "USB-C for AI." As Anthropic's official blog describes it: instead of building a separate connector for every data source, you connect everything through one standard protocol. (Anthropic, November 2024)

Here's how the old approach compares to MCP:

| Item | Old Approach | MCP Approach |
|---|---|---|
| Connection method | Custom API code for each tool | Single standard protocol |
| Authentication | Separate OAuth, API keys, etc. per integration | Standardized by the spec (OAuth-based authorization for HTTP transports) |
| Reusability | Re-implement for every app | Build once, use across multiple AI apps |
| Ecosystem | Closed/proprietary | Open source, community-shared servers |

MCP servers can expose three types of capabilities:

- Resources: Read-only data like files or API responses

- Tools: Functions the LLM can call (with user approval)

- Prompts: Pre-defined templates for specific tasks

Tools are the most commonly used and are the focus of today's walkthrough. Per the MCP official docs, a single server can serve multiple clients (Claude Desktop, custom apps, etc.) simultaneously. (March 2026)
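On the wire, each capability type corresponds to a pair of JSON-RPC 2.0 methods in the MCP specification: one to list what is available and one to use it. The sketch below only builds the request messages a client would send, so nothing here talks to a real server. The `make_request` helper is a hypothetical name, and the `get_alerts` arguments are illustrative.

```python
import json
from itertools import count
from typing import Optional

# Methods the MCP specification defines for each capability type
CAPABILITY_METHODS = {
    "resources": ("resources/list", "resources/read"),
    "tools": ("tools/list", "tools/call"),
    "prompts": ("prompts/list", "prompts/get"),
}

_ids = count(1)  # JSON-RPC request ids should be unique within a session


def make_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize one JSON-RPC 2.0 request as a single line of JSON."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Discover what a server offers, then call one tool
for list_method, _use_method in CAPABILITY_METHODS.values():
    print(make_request(list_method))
print(make_request("tools/call", {"name": "get_alerts", "arguments": {"state": "CA"}}))
```

A real client sends an `initialize` handshake before any of these, and over the stdio transport each message travels as one newline-delimited line of JSON. In practice the SDK handles all of this for you; the sketch is only meant to show what sits underneath the decorators.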

Prerequisites: Environment Setup

What you'll need:

| Item | Version / Requirement |
|---|---|
| Python | 3.10+ |
| uv (package manager) | Latest |
| Claude Desktop | Latest (free plan works) |
| OS | macOS, Windows, or Linux |

```bash
# Install uv if you don't have it (macOS/Linux)
curl -LsSf https://astral.sh/uv/install.sh | sh

# Initialize the project
mkdir mcp-demo && cd mcp-demo
uv init
uv add "mcp[cli]" httpx

# Create the server file
touch server.py
```

A note on uv: if you haven't used it before, it's significantly faster than pip and handles virtual environments cleanly. The MCP documentation defaults to it, which prompted me to switch — and dependency installs feel about 5× faster.

Step 1: Build Your First MCP Server

Let's build a simple MCP server that fetches weather data. This is based on the MCP official tutorial, adapted slightly for a real-world shape.

```python
# server.py
from mcp.server.fastmcp import FastMCP
import httpx

# Create the FastMCP server instance
mcp = FastMCP("weather-demo")

# US National Weather Service API (covers US locations only)
NWS_API = "https://api.weather.gov"
HEADERS = {"User-Agent": "mcp-weather-demo/1.0"}


@mcp.tool()
async def get_forecast(latitude: float, longitude: float) -> str:
    """Fetches the weather forecast for a US coordinate.

    Args:
        latitude: Latitude (e.g., 38.8951 for Washington, DC)
        longitude: Longitude (e.g., -77.0364 for Washington, DC)
    """
    async with httpx.AsyncClient() as client:
        # Step 1: Get the grid point for the coordinates
        points_url = f"{NWS_API}/points/{latitude},{longitude}"
        resp = await client.get(points_url, headers=HEADERS)
        if resp.status_code != 200:
            return f"Grid lookup failed: {resp.status_code}"
        forecast_url = resp.json()["properties"]["forecast"]

        # Step 2: Fetch the forecast data
        forecast_resp = await client.get(forecast_url, headers=HEADERS)
        if forecast_resp.status_code != 200:
            return f"Forecast request failed: {forecast_resp.status_code}"
        periods = forecast_resp.json()["properties"]["periods"]

    # Return the first 3 periods
    result = []
    for p in periods[:3]:
        result.append(
            f" {p['name']}: {p['temperature']}°{p['temperatureUnit']}\n"
            f" {p['detailedForecast']}"
        )
    return "\n\n".join(result)


@mcp.tool()
async def get_alerts(state: str) -> str:
    """Fetches active weather alerts for a US state.

    Args:
        state: US state abbreviation (e.g., CA, NY, TX)
    """
    async with httpx.AsyncClient() as client:
        url = f"{NWS_API}/alerts/active?area={state}"
        resp = await client.get(url, headers=HEADERS)
        if resp.status_code != 200:
            return f"Alert lookup failed: {resp.status_code}"
        alerts = resp.json().get("features", [])

    if not alerts:
        return f"No active alerts for {state}."

    # Return at most 5 alerts
    result = []
    for alert in alerts[:5]:
        props = alert["properties"]
        result.append(
            f"⚠️ {props['event']}\n"
            f" Severity: {props['severity']}\n"
            f" {props['headline']}"
        )
    return "\n\n".join(result)


if __name__ == "__main__":
    mcp.run(transport="stdio")
```

The key here is the @mcp.tool() decorator. FastMCP reads the function's type hints and docstring and automatically generates the tool schema — no manual schema definition needed. In the TypeScript SDK you'd write Zod schemas by hand; Python handles it automatically.

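One tip that's not obvious from the docs: keep the Args section in Google-style docstring format. FastMCP passes the docstring to the model as the tool's description, so clearly documented parameters help the model fill in arguments correctly. If you want a description attached to an individual parameter in the generated schema, a pydantic Field(description=...) annotation is the explicit route.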
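To get a feel for what that schema generation involves, here is a rough standard-library sketch that derives a tool description from a function's signature and docstring. It is not FastMCP's actual implementation (which builds on pydantic and handles far more types). `sketch_tool_schema` is a hypothetical helper, and the `days` parameter was added only to show how an optional argument behaves.

```python
import inspect
from typing import get_type_hints

# Minimal mapping from Python annotations to JSON Schema type names
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def sketch_tool_schema(fn) -> dict:
    """Build a tool description from a function's signature and docstring."""
    hints = get_type_hints(fn)
    properties, required = {}, []
    for name, param in inspect.signature(fn).parameters.items():
        # Unknown or missing annotations fall back to "string" in this sketch
        properties[name] = {"type": JSON_TYPES.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)  # no default value means the argument is mandatory
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


async def get_forecast(latitude: float, longitude: float, days: int = 3) -> str:
    """Fetches weather forecast for a given coordinate."""
    return ""


print(sketch_tool_schema(get_forecast))
```

The takeaway is that accurate type hints and a clear docstring are the whole interface: whatever they say is what the model sees when deciding how to call the tool.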

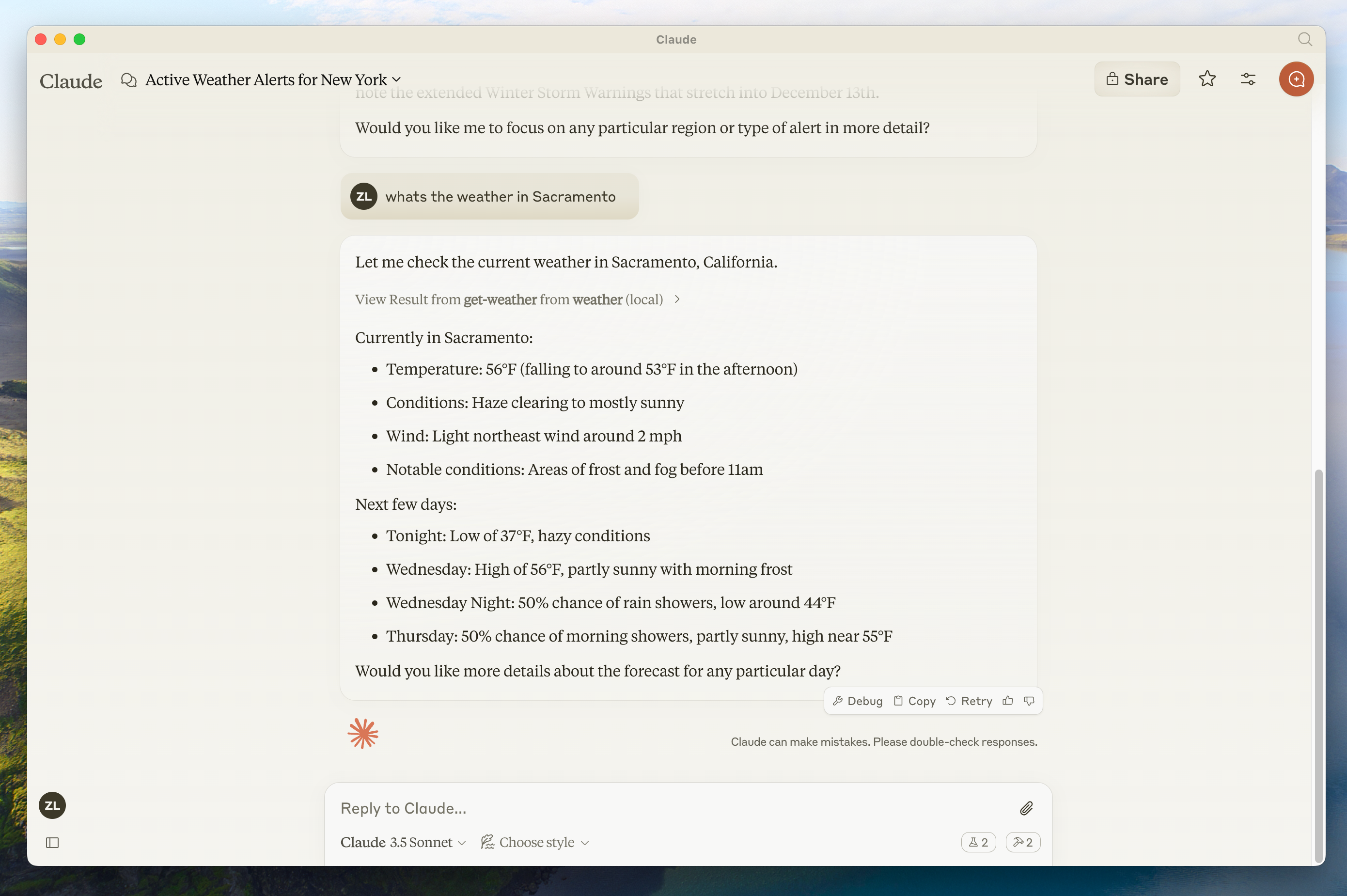

Source: MCP Official Docs | Weather tool running in Claude Desktop via MCP
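One refactor worth considering for the get_forecast tool: pull the text formatting out into a pure function so it can be unit-tested without touching the network. The sketch below assumes the same `periods` structure that the server code reads from the NWS response. `format_periods` and the sample data are hypothetical and not part of the official tutorial.

```python
def format_periods(periods: list[dict], limit: int = 3) -> str:
    """Format the first `limit` forecast periods as readable text."""
    if not periods:
        return "No forecast periods returned."
    lines = []
    for p in periods[:limit]:
        # .get() with fallbacks keeps one malformed period from crashing the tool
        name = p.get("name", "Unknown period")
        temp = p.get("temperature", "?")
        unit = p.get("temperatureUnit", "")
        detail = p.get("detailedForecast", "No details available.")
        lines.append(f"{name}: {temp}°{unit}\n  {detail}")
    return "\n\n".join(lines)


# Made-up sample shaped like the NWS "periods" list
sample = [
    {"name": "Tonight", "temperature": 58, "temperatureUnit": "F",
     "detailedForecast": "Mostly clear."},
    {"name": "Friday", "temperature": 74, "temperatureUnit": "F"},
]
print(format_periods(sample))
```

With this split, the tool body shrinks to the two HTTP requests plus a call to the formatter, and the formatter can be covered by ordinary tests.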

Step 2: Connect to Claude Desktop

Now register the server with Claude Desktop. Open the config file (macOS path shown; on Windows it lives at %APPDATA%\Claude\claude_desktop_config.json):

```bash
code ~/Library/Application\ Support/Claude/claude_desktop_config.json
```

Add this:

```json
{
  "mcpServers": {
    "weather-demo": {
      "command": "uv",
      "args": [
        "--directory",
        "/Users/yourname/mcp-demo",
        "run",
        "server.py"
      ]
    }
  }
}
```

Replace the directory path with your actual project path. (I forgot to do this the first time and got a "server connection failed" error — don't skip it.)
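If you would rather not hand-edit JSON, the entry can be generated. The following is a minimal sketch, assuming the config layout shown above. `add_server` is a hypothetical helper, and the "other-server" entry is made up to show that existing servers are preserved.

```python
import json
import shutil
from pathlib import Path


def add_server(config: dict, name: str, project_dir: Path, script: str = "server.py") -> dict:
    """Return a copy of `config` with one more entry under "mcpServers"."""
    # Prefer the absolute path to uv; fall back to the bare name if it is not on PATH
    uv_path = shutil.which("uv") or "uv"
    servers = dict(config.get("mcpServers", {}))
    servers[name] = {
        "command": uv_path,
        "args": ["--directory", str(project_dir.resolve()), "run", script],
    }
    return {**config, "mcpServers": servers}


# Start from a config that already has another (made-up) server registered
existing = {"mcpServers": {"other-server": {"command": "node", "args": ["index.js"]}}}
updated = add_server(existing, "weather-demo", Path("/Users/yourname/mcp-demo"))
print(json.dumps(updated, indent=2))
```

To apply it for real, you would load the existing file with `json.loads(path.read_text())`, pass it through `add_server`, and write the result back, ideally after making a backup copy.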

Save the file, then fully quit and restart Claude Desktop. A tools icon (a hammer in earlier releases) will appear next to the chat input. Click it and you'll see get_forecast and get_alerts listed as available tools.

Per Stainless's MCP SDK comparison, the Python SDK is "best for rapid prototyping" while TypeScript is "more robust for production deployment." For side projects and internal tools, Python is more than sufficient. (March 2026)

Step 3: Debug with MCP Inspector

Before connecting to Claude Desktop, test your server with MCP Inspector first:

```bash
# Run MCP Inspector
npx @modelcontextprotocol/inspector uv run server.py
```

The Inspector opens a browser UI where you can view registered tools and invoke them directly. This saved me considerable debugging time — you can see exactly what schema the server is exposing and what responses it returns in real time.
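What the Inspector displays is, underneath, the server's JSON-RPC responses. The sketch below parses a hand-written `tools/list` result to show where the schema lives inside the message. The payload is illustrative, shaped after the MCP specification rather than captured from a real session, and `summarize_tools` is a hypothetical helper.

```python
import json

# Hand-written sample of a tools/list response (not captured output)
raw_response = """
{"jsonrpc": "2.0", "id": 2, "result": {"tools": [
  {"name": "get_alerts",
   "description": "Fetches active weather alerts for a US state.",
   "inputSchema": {"type": "object",
                   "properties": {"state": {"type": "string"}},
                   "required": ["state"]}}
]}}
"""


def summarize_tools(response_text: str) -> list[str]:
    """Return one line per tool: its name, parameters, and which are optional."""
    result = json.loads(response_text)["result"]
    lines = []
    for tool in result["tools"]:
        schema = tool.get("inputSchema", {})
        required = set(schema.get("required", []))
        params = [
            f"{name}: {spec.get('type', 'any')}{'' if name in required else ' (optional)'}"
            for name, spec in schema.get("properties", {}).items()
        ]
        lines.append(f"{tool['name']}({', '.join(params)})")
    return lines


print(summarize_tools(raw_response))
```

If a parameter shows up with the wrong type or is unexpectedly optional, the fix usually belongs in the function's type hints rather than in the client.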

Common Errors and Fixes

1. "Server disconnected" error

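The most common cause is a path error in claude_desktop_config.json. Claude Desktop may not inherit your shell's PATH, so a bare "uv" can fail to resolve even though it works in your terminal. Specifying the full path to uv usually resolves it:

```bash
# Find the full path
which uv
# e.g., /Users/yourname/.local/bin/uv
```

Replace "command": "uv" with the full path.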
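These path mistakes can also be caught before restarting Claude Desktop. Here is a small sketch that checks a single "mcpServers" entry for the two problems described in this guide: a command that is not an absolute path, and a project directory that does not exist. `diagnose_entry` is a hypothetical helper, and the checks assume the `uv --directory ... run ...` layout used above.

```python
import os
import shutil


def diagnose_entry(entry: dict) -> list[str]:
    """Return human-readable problems found in one "mcpServers" entry."""
    problems = []
    command = entry.get("command", "")
    if not command:
        problems.append('missing "command"')
    elif not os.path.isabs(command):
        problems.append(f'"command" is not an absolute path: {command}')
    elif shutil.which(command) is None:
        problems.append(f'"command" does not point to an executable: {command}')

    args = entry.get("args", [])
    if "--directory" in args:
        idx = args.index("--directory")
        directory = args[idx + 1] if idx + 1 < len(args) else ""
        if not os.path.isdir(directory):
            problems.append(f"project directory does not exist: {directory}")
    return problems


entry = {
    "command": "uv",
    "args": ["--directory", "/Users/yourname/mcp-demo", "run", "server.py"],
}
for problem in diagnose_entry(entry):
    print("-", problem)
```

An empty list means the entry passed these two checks, though the server can still fail for other reasons, such as an import error in server.py.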

2. "Tool not found" — tools aren't showing up

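If the @mcp.tool() decorator is applied but the tool isn't visible, first confirm that the server starts without errors and that Claude Desktop was fully restarted after the config change. Also check whether you used def instead of async def. FastMCP supports both, but a tool that makes HTTP calls is more reliable as async def, because a blocking request inside a plain def can stall the server while it waits.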
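The difference between blocking and awaiting is easy to see with plain asyncio, no MCP involved. In this sketch, `blocking_tool` stands in for a tool body that does synchronous I/O on the event loop thread, and `async_tool` for one that awaits its I/O. Both names are hypothetical, and the sleeps simulate a slow HTTP call.

```python
import asyncio
import time


async def blocking_tool(name: str, log: list[str]) -> None:
    """Stands in for a tool that does blocking I/O, like a synchronous HTTP call."""
    log.append(f"{name} start")
    time.sleep(0.1)  # blocks the whole event loop
    log.append(f"{name} end")


async def async_tool(name: str, log: list[str]) -> None:
    """Stands in for a tool that awaits its I/O, like httpx.AsyncClient."""
    log.append(f"{name} start")
    await asyncio.sleep(0.1)  # yields control while waiting
    log.append(f"{name} end")


async def run_pair(tool) -> list[str]:
    """Run two calls concurrently and record the order of events."""
    log: list[str] = []
    await asyncio.gather(tool("A", log), tool("B", log))
    return log


blocking_log = asyncio.run(run_pair(blocking_tool))
async_log = asyncio.run(run_pair(async_tool))
print(blocking_log)  # A finishes before B even starts
print(async_log)     # both start before either finishes
```

With the blocking version, the second call cannot begin until the first has finished, which is the kind of stall that shows up as an unresponsive server when an upstream API is slow.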

3. Timeout errors

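Slow external API calls can time out before the tool returns. Note that httpx applies its own default timeout of 5 seconds, so that limit is usually reached well before any timeout on the MCP client side. Raise it explicitly when creating the client:

```python
httpx.AsyncClient(timeout=60.0)
```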

I lost 30 minutes to the NWS API being intermittently slow — adding the explicit timeout fixed it.
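A longer timeout helps, but it is also worth deciding what the tool returns when the deadline still passes. The sketch below uses only asyncio to put a deadline around a slow call and hand back a readable message instead of raising. `slow_api_call` is a hypothetical stand-in for the real request, and the delays are shortened so the example runs quickly.

```python
import asyncio


async def slow_api_call(delay: float) -> str:
    """Hypothetical stand-in for a slow external request."""
    await asyncio.sleep(delay)
    return "forecast data"


async def call_with_deadline(delay: float, deadline: float) -> str:
    """Return the result, or a short error string if the deadline passes."""
    try:
        return await asyncio.wait_for(slow_api_call(delay), timeout=deadline)
    except asyncio.TimeoutError:
        # Returning text lets the model explain the failure to the user
        return f"Upstream API did not respond within {deadline}s. Try again later."


print(asyncio.run(call_with_deadline(delay=0.01, deadline=1.0)))
print(asyncio.run(call_with_deadline(delay=0.5, deadline=0.1)))
```

The same idea applies when using httpx directly: catch its timeout exception inside the tool and return a short explanation, so the model can tell the user what happened rather than surfacing a stack trace.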

Why MCP Became the Standard

According to Wikipedia's MCP article, as of March 2026, OpenAI, Microsoft, and Google have all adopted MCP in their AI platforms. Anthropic created it, but it's no longer just Anthropic's protocol.

The GitHub MCP organization now hosts official SDKs for Python, TypeScript, and Go, plus hundreds of community-built MCP servers. Slack, GitHub, PostgreSQL, Google Drive — most services you'd want to connect already have someone's MCP server implementation.

| SDK | Strengths | Best For |
|---|---|---|
| Python (FastMCP) | Fast development with decorators, automatic type inference | Prototyping, data analysis tools |
| TypeScript | Strict typing, production stability | Web service integration, large-scale deployment |
| Go | High performance, low memory footprint | Infrastructure tools, high-volume processing |

Summary + Next Steps

MCP is foundational infrastructure for the AI agent era. It's worth building familiarity with it now.

Key takeaways:

- FastMCP + Python: A custom server takes about 30 minutes

- Claude Desktop connection: One JSON config block

- MCP Inspector: Essential for debugging

- Ecosystem: Hundreds of community servers already available

Next up: building a genuinely useful MCP server — one that lets you query an internal PostgreSQL database with natural language. I'll also cover advanced features from Anthropic's MCP advanced course, including sampling and notifications.

References:

- Introducing the Model Context Protocol | Anthropic Official Blog (November 2024)

- Build an MCP Server | MCP Official Docs (March 2026)

- MCP SDK Comparison | Stainless (March 2026)

- Model Context Protocol | Wikipedia (March 2026)

- MCP GitHub Organization (March 2026)
